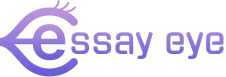

Why Institutions Begin the Search for an AI Essay Grader

Most colleges and universities do not set out to automate grading. Instead, they usually start looking for an AI essay grader in response to specific pressures, such as:

- Grade appeals that expose inconsistent scoring

- Program reviews requiring measurable learning outcomes

- Faculty fatigue from high-volume, repetitive evaluations

At this point, leaders are not searching for easy solutions; they want grading that is stable, fair, and consistent across the institution.

The First Gatekeeper Question: Can the System Apply Our Rubric?

Nothing is more important than making sure the system follows the rubric closely. For example, if the rubric requires specific feedback on thesis clarity, the AI must be able to determine whether the essay’s thesis statement is clear, well-defined, and aligned with disciplinary expectations. The system should explain how it recognizes and evaluates the thesis statement, using language and criteria directly from the department’s rubric. This kind of transparent alignment reassures faculty that AI scoring is rooted in their standards, not generic benchmarks.

If an automated essay grader cannot correctly use the department’s language, learning goals, and performance standards, the process stops immediately.

Universities expect:

- Customizable rubric criteria

- Discipline-specific interpretation

- Alignment across sections and semesters

- Repeatable scoring logic

- Clear alignment to institutional learning outcomes, with direct mapping to familiar accreditation standards such as MSCHE Standard V (Educational Effectiveness Assessment)

Modern essay-grading software should act as part of the academic system, not just offer opinions. It needs to support the standards set by faculty, not change them.

Immediate Disqualifiers in Committee Review

Evaluation committees act fast when they see certain risks. They usually reject systems if they:

- Generate new grading criteria

- Change scoring logic without transparency

- Use opaque or unexplainable models

- Override instructor authority

- Provide scores without traceable justification

Institutions are not handing over judgment. They keep control of it and use the system to make the grading process more consistent.

Faculty must always have the main authority in any AI-supported essay grading system.
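As an illustration only, the rubric requirements and disqualifiers above can be expressed as an automated check on any set of AI-suggested scores. The sketch below is not Essay Eye's implementation; the `Criterion` and `CriterionScore` types, the `validate_scores` function, and the sample criterion names are all hypothetical. It flags suggestions that invent criteria outside the department's rubric, fall outside a criterion's point range, skip a criterion, or arrive without a written justification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One rubric row, written in the department's own language (hypothetical type)."""
    name: str
    max_points: int
    outcome: str  # institutional learning outcome this criterion maps to


@dataclass(frozen=True)
class CriterionScore:
    """A suggested score for one criterion, with its justification (hypothetical type)."""
    criterion: str
    points: int
    justification: str


def validate_scores(rubric, scores):
    """Return a list of problems; an empty list means the scores stay within the rubric."""
    by_name = {c.name: c for c in rubric}
    problems = []
    seen = set()
    for s in scores:
        seen.add(s.criterion)
        crit = by_name.get(s.criterion)
        if crit is None:
            # The system produced a criterion the department never defined.
            problems.append(f"unknown criterion: {s.criterion}")
            continue
        if not 0 <= s.points <= crit.max_points:
            problems.append(f"points out of range: {s.criterion}")
        if not s.justification.strip():
            # A score with no traceable reasoning attached.
            problems.append(f"missing justification: {s.criterion}")
    for name in by_name:
        if name not in seen:
            problems.append(f"criterion not scored: {name}")
    return problems


# Example with made-up rubric rows and outcome codes.
rubric = [
    Criterion("Thesis clarity", 4, "LO-1"),
    Criterion("Use of evidence", 4, "LO-2"),
]
suggested = [
    CriterionScore("Thesis clarity", 3, "Thesis is stated in the first paragraph."),
    CriterionScore("Originality", 5, ""),
]
for problem in validate_scores(rubric, suggested):
    print(problem)
```

A check like this only reports problems. It leaves the decision about what to do with a flagged score to the instructor, which keeps final authority with faculty.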

How Universities Evaluate AI Essay Grader Platforms

After ensuring the system aligns with the rubric, committees consider other important factors for the institution.

1. Transparency

Can instructors see exactly how feedback connects to rubric categories?

Is there traceable reasoning for each score?

2. Control

Do faculty retain final authority over grades?

Can they adjust or override AI suggestions?

3. Documentation

Can reports support accreditation reviews, assessment audits, and curriculum mapping?

Is scoring data exportable and archivable?

4. Scalability

Can the platform function reliably across hundreds or thousands of submissions?

Does it maintain scoring consistency over time?

A trustworthy online essay grader for teachers must meet all four of these requirements simultaneously.

Where Many AI Tools Fall Short

Many AI tools are built for speed, not for producing results that can be defended.

These tools might work well as writing helpers for students, but schools need something very different for grading.

Universities are not generating text.

They are evaluating learning.

That requires:

- Traceability

- Consistency

- Repeatability

- Outcome alignment

- Governance control

If these safeguards are missing, schools will not move forward with adoption, no matter how advanced the technology is.

The Typical Institutional Adoption Process

Every campus has its own decision-making process, but adoption usually follows a similar pattern.

Step 1 – Pilot in a High-Enrollment Course

Common starting points include first-year composition, general education writing, or writing-intensive programs.

Step 2 – Human vs. AI Agreement Analysis

Departments compare rubric scoring consistency between instructors and the AI system.
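One common way to run this comparison is to score the same set of essays twice, once by an instructor and once by the AI system, and then compute agreement statistics for each rubric criterion. The sketch below is an assumption about how a department might do this, not a method described by any specific platform. The function name `agreement_report` and the sample scores are hypothetical. It reports exact agreement, adjacent agreement (scores within one point), and unweighted Cohen's kappa, which corrects exact agreement for the amount expected by chance.

```python
from collections import Counter


def agreement_report(human, ai):
    """Compare two equal-length lists of integer rubric scores for the same essays."""
    if len(human) != len(ai) or not human:
        raise ValueError("score lists must be non-empty and the same length")
    n = len(human)
    pairs = list(zip(human, ai))

    # Share of essays where both raters gave the identical score.
    exact = sum(h == a for h, a in pairs) / n
    # Share of essays where the two scores differ by at most one point.
    adjacent = sum(abs(h - a) <= 1 for h, a in pairs) / n

    # Chance agreement: probability both raters pick the same score level
    # if each rated independently according to their own score distribution.
    h_counts = Counter(human)
    a_counts = Counter(ai)
    expected = sum(h_counts[k] * a_counts[k] for k in h_counts) / (n * n)

    # If both raters used one identical score for every essay, kappa is
    # undefined; agreement is perfect in that case, so report 1.0.
    kappa = 1.0 if expected == 1 else (exact - expected) / (1 - expected)
    return {"exact": exact, "adjacent": adjacent, "kappa": kappa}


# Made-up scores on a 1-4 scale for one criterion across eight essays.
instructor_scores = [4, 3, 3, 2, 4, 1, 2, 3]
ai_scores = [4, 3, 2, 2, 4, 2, 2, 3]
print(agreement_report(instructor_scores, ai_scores))
```

Running the same report on two instructors scoring the same essays gives a baseline, so the human-versus-AI figures can be read against ordinary human-versus-human variability.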

Step 3 – Feedback Turnaround Measurement

Departments measure how quickly students receive structured feedback, because faster feedback often correlates with improved student revision cycles.

Step 4 – Reporting Evaluation

Assessment committees test whether outputs support accreditation standards and learning outcome reporting.

Step 5 – Gradual Expansion

Adoption grows one department at a time, rather than happening across the whole institution all at once.

Taking this step-by-step approach helps lower risk and build trust within the institution.

Institutional Risk Management: What Decision-Makers Prioritize

Provosts, deans, and assessment directors evaluate AI through the lens of institutional risk.

They seek assurance that:

- Faculty maintain grading authority.

- Students are not receiving unauthorized generative assistance.

- Outcomes can withstand public scrutiny.

- Data integrity is preserved.

- Academic policies remain enforceable.

A well-designed AI essay-scoring platform should strengthen governance, not weaken it.

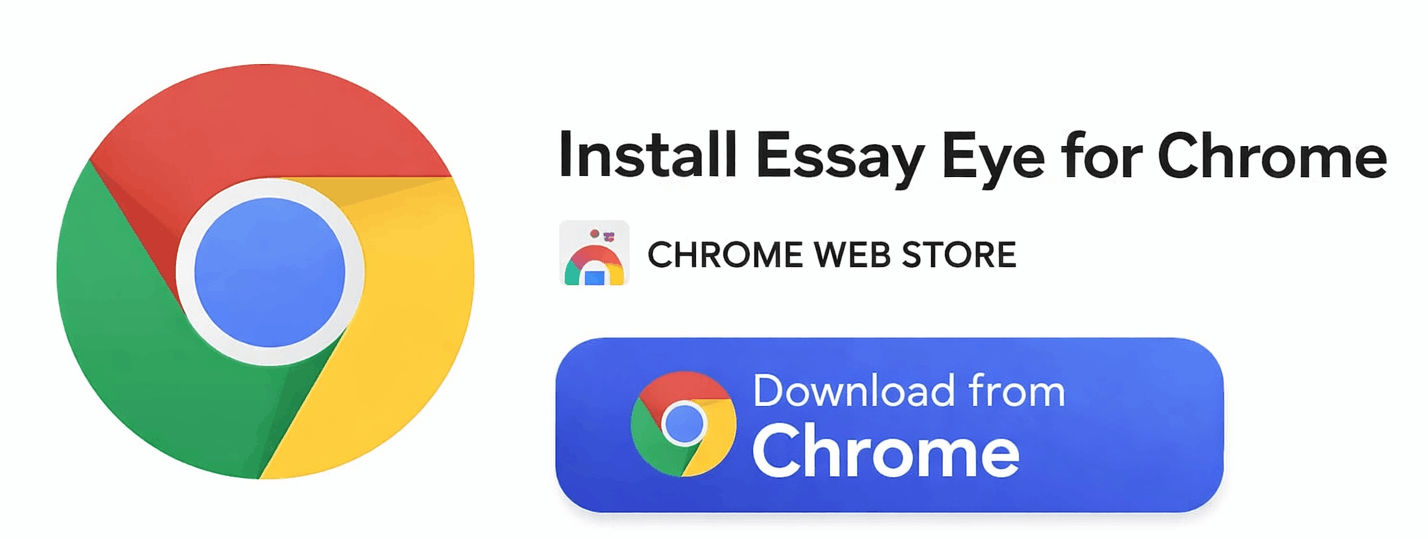

Can AI Improve Writing Without Writing for Students?

Yes, when the system focuses on structured evaluation rather than content generation.

An online essay grading assistant can:

- Deliver criterion-based observations

- Identify performance patterns across cohorts

- Highlight recurring weaknesses

- Support targeted revision

When used correctly, AI can help improve students’ essays without taking away their own work.

This difference is very important for a school’s reputation.

Budget Considerations and Institutional ROI

Universities evaluate investment differently from individual instructors.

Cost is compared against institutional outcomes such as:

- Reduced grading variability

- Fewer grade disputes

- Faster revision cycles

- Improved student retention

- Stronger accreditation evidence

- Data-driven curriculum refinement

Seen this way, an online essay assessment tool is part of the school’s quality assurance system, not just something bought for convenience.

Beyond Higher Education

While adoption often begins in universities, structured AI evaluation extends naturally into:

- Dual-enrollment programs

- Advanced secondary education

- International preparatory programs

In these settings, platforms can also be used as high school essay graders, where it is just as important to maintain consistent grading across different classes.

The need for standardized evaluation follows the learner.

The Questions Every Committee Eventually Asks

Before approving implementation, decision-makers typically require clear answers to:

- How are rubric criteria technically applied?

- Who retains final grading authority?

- What documentation exists for audit review?

- How does this system compare to manual variability rates?

- Can implementation scale responsibly?

- What safeguards protect academic integrity?

If a platform cannot answer these questions clearly and openly, it will not move past the pilot phase.

Why Some Institutions Choose Essay Eye

Essay Eye was designed specifically for structured academic evaluation rather than adapted from a generative AI framework.

It operates as:

- An interactive essay grader

- Structured essay analysis software

- A defensible automated feedback system

- A faculty-controlled evaluation environment

Its purpose is not to take the place of academic judgment, but to support it by adding consistency and clear records.

Final Perspective: Adoption Is Ultimately About Trust

Universities take careful steps because they are responsible for protecting academic standards.

They are accountable to:

- Students

- Faculty

- Accrediting bodies

- Regulatory agencies

- The public

The right AI system does not diminish academic standards.

It helps schools demonstrate that they apply their standards consistently, openly, and fairly. In essay assessment, adoption is not about speed.

It is really about building stability, strong oversight, and trust.

See Essay Eye in Action

Want to see how structured AI essay scoring works inside your rubric framework?

👉 Request a Product Demo

Explore how Essay Eye applies department rubrics, generates criterion-based feedback, and supports defensible academic evaluation.

Schedule a Demo:

Install the Essay Eye Chrome Extension

For streamlined workflow integration, Essay Eye is also available as a Chrome extension, allowing faculty to evaluate essays directly within supported learning platforms.

Download the Chrome Extension: